Over the past four decades, scientists have slowly built up a library of dolphin vocalizations using hydrophones, spectrograms, and countless hours of observation. Now, a new artificial intelligence project aims to dramatically speed up this process and perhaps even allow two-way communication between humans and dolphins.

The project, called DolphinGemma, is the result of a collaboration between marine biologist Dr. Denise Herzing, founder of The Wild Dolphin Project (WDP), and Dr. Thad Starner, a research scientist at Google DeepMind. DolphinGemma is described as the first large language model (LLM) designed specifically to understand dolphin vocalizations. It builds on Gemma, Google’s family of open models, which shares its foundational technology with the company’s Gemini language models.

Decades of dolphin data

Herzing and her team at WDP have been studying a single pod of wild Atlantic spotted dolphins off the coast of the Bahamas since the 1980s. Over the years, they’ve compiled an extensive audio library of dolphin communication, including whistles (some of which may serve as individual names), echolocation clicks used for navigation and hunting, and burst-pulse sounds used in social interactions.

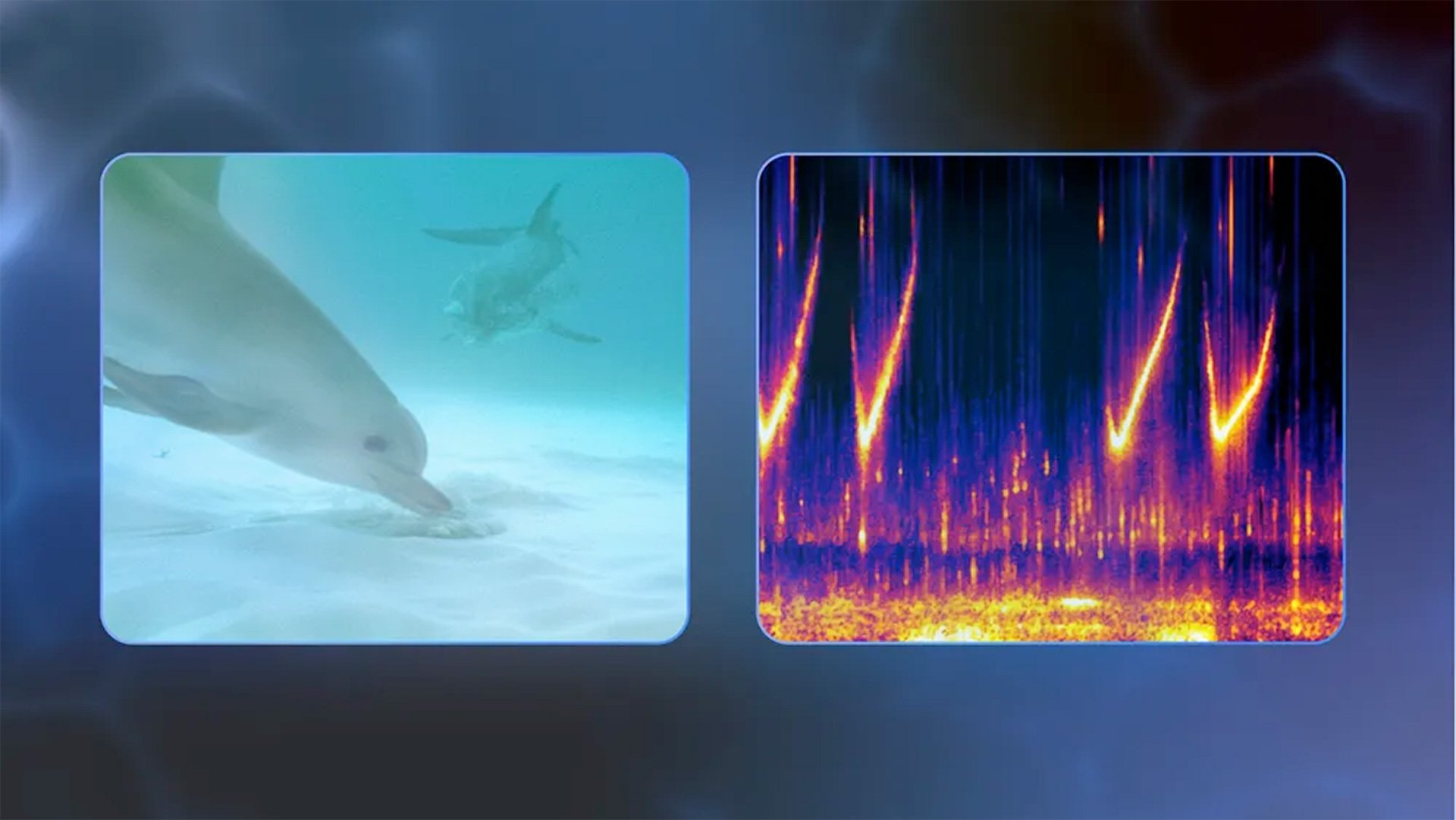

Traditionally, researchers have used hydrophones to record these sounds and spectrograms to analyze their frequency patterns. They would then play the sounds back to the dolphins and observe their reactions. While this approach has yielded insights, it has been an extremely slow and manual process.

The DolphinGemma model changes that dynamic. Trained on WDP’s vast database, the LLM can detect patterns and structures in dolphin communication by representing sounds as “tokens” and analyzing sequences to predict what might come next. “Feeding dolphin sounds into an AI model like DolphinGemma will give us a really good look at if there are patterns, subtleties that humans can’t pick out,” Herzing explained.

Borrowing tricks from chatbots

The model borrows techniques used in large language models to predict the next word or phrase in human conversation. Similarly, DolphinGemma analyzes dolphin vocal sequences and can suggest what sounds are likely to follow. This could help researchers identify recurring sound structures, assign meaning to specific sequences, and potentially interpret the intent behind them.

Google notes that the system functions much like a text predictor on your smartphone, except that it forecasts clicks and whistles instead of words.
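The text-predictor analogy can be made concrete with a toy sketch. The code below is not DolphinGemma and does not reflect how its audio tokens are actually produced; it is a minimal bigram counter, assuming sounds have already been converted into discrete labels. The labels (`whistle_A`, `click`, `burst`) and the sequences are invented for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical token sequences; real recordings and tokens would look different.
sequences = [
    ["whistle_A", "click", "click", "burst"],
    ["whistle_A", "click", "burst"],
    ["whistle_B", "whistle_A", "click", "burst"],
]

def train_bigram(seqs):
    """Count how often each token is followed by each other token."""
    counts = defaultdict(Counter)
    for seq in seqs:
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1
    return counts

def predict_next(counts, token):
    """Return (most likely next token, estimated probability), or None if unseen."""
    followers = counts.get(token)
    if not followers:
        return None
    total = sum(followers.values())
    best, n = followers.most_common(1)[0]
    return best, n / total

model = train_bigram(sequences)
print(predict_next(model, "whistle_A"))  # ('click', 1.0)
print(predict_next(model, "click"))      # ('burst', 0.75)
```

A large language model replaces these simple counts with a neural network that conditions on much longer context, but the task is the same: given the sounds so far, estimate what is likely to come next.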

The team hopes that as the model processes more data, it will become increasingly accurate in recognizing specific communication patterns. Importantly, the system is designed to be scalable. It can be fine-tuned to incorporate additional data, including from other dolphin species such as bottlenose or spinner dolphins.

Exploring two-way communication

While decoding dolphin language is one part of the project, another major goal is to enable two-way communication. Dr. Starner, who is also a professor at the Georgia Institute of Technology and one of the original developers of Google Glass, has been working with Herzing on interactive systems for years.

In the 1990s, researchers tried using a floating keyboard with large buttons, each corresponding to a specific artificial dolphin whistle and object. The idea was that dolphins could “press” buttons with their rostrums (snouts) to request toys or other items. While novel, the setup was too static and did not lead to meaningful back-and-forth communication.

In 2010, the WDP team transitioned to an underwater keyboard that divers could swim with, making the interaction more dynamic. That same year, they began development of a wearable system called CHAT (Cetacean Hearing and Telemetry) with Georgia Tech.

CHAT was designed as a diver-wearable computer system that allowed for real-time interaction. It featured underwater microphones (hydrophones) to record dolphin vocalizations, speakers to play back artificial whistles, and an interface that researchers could use to engage with dolphins in the water.

The key idea behind CHAT was associating specific dolphin-made or AI-generated sounds with objects and actions. For example, a toy would be handed over to a dolphin while a particular whistle played. If the dolphin mimicked the sound later, the system would tell the researcher (via bone-conducting headphones) which object the dolphin appeared to be “requesting.” The researcher could then provide the associated toy, reinforcing the learning loop.
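The matching step in that loop can be sketched in a few lines. This is not CHAT's actual algorithm, which the article does not describe; it is a simplified illustration that assumes each whistle has already been reduced to a short frequency contour. The object names, contour values, and distance threshold are all hypothetical.

```python
# Hypothetical whistle templates: coarse frequency contours in kHz, one per object.
TEMPLATES = {
    "rope_toy": [8.0, 10.0, 12.0, 14.0],
    "ball": [14.0, 12.0, 12.0, 9.0],
}

def mean_abs_diff(a, b):
    """Average absolute difference between two equal-length contours."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)

def identify_request(contour, templates=TEMPLATES, max_distance=1.0):
    """Return the object whose template is closest to the heard contour,
    or None if nothing is within max_distance (an assumed threshold)."""
    best_obj, best_dist = None, float("inf")
    for obj, template in templates.items():
        if len(template) != len(contour):
            continue  # this sketch only compares contours of equal length
        dist = mean_abs_diff(contour, template)
        if dist < best_dist:
            best_obj, best_dist = obj, dist
    return best_obj if best_dist <= max_distance else None

# A slightly imperfect mimic of the "rope_toy" whistle still matches.
print(identify_request([8.2, 9.7, 12.1, 13.8]))  # rope_toy
# An unrelated sound matches nothing.
print(identify_request([5.0, 5.0, 5.0, 5.0]))    # None
```

In a real system, the returned object name would be what gets relayed to the diver, and a rejection threshold of some kind matters because most sounds a dolphin makes will not be mimics of a trained whistle.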

Upgraded tools, smarter AI

Today’s version of CHAT is more compact and accessible than its predecessors, thanks to the integration of Google Pixel smartphones. These off-the-shelf devices make the system easier to maintain, lighter to carry, and less power-hungry. The phones help run the AI models and manage the vocalization prediction processes in real time.

With DolphinGemma powering the backend and improved wearable tech for data collection, researchers are now equipped to react more quickly to dolphin interactions and conduct more natural engagement sessions. This enhanced interactivity could open the door to discovering how dolphins use sound not just for socializing, but potentially to share information about their surroundings or intentions.

Herzing’s team plans to deploy the DolphinGemma model in their upcoming field research season. According to Google, the model is also expected to be released as an open model later this year, making it accessible to other researchers around the world. That would allow for broader testing across other dolphin species and regional populations, accelerating global understanding of cetacean communication.

What do dolphins talk about?

One of the more fascinating possibilities raised by this work is the idea that dolphins may have not only language, but culture. “If dolphins have language, then they probably also have culture,” Starner said. “You’re going to understand what priorities they have, what do they talk about?”

This raises broader questions about how non-human species think, socialize, and organize themselves. Language is often seen as a marker of intelligence and societal complexity. If models like DolphinGemma can help researchers uncover structured patterns in dolphin conversations, it may lead to new understandings about how intelligent life manifests in other species.

The team acknowledges that the road to fluent two-way communication is still long. But with tools like DolphinGemma and CHAT, researchers are inching closer to that possibility, one whistle at a time.

Source: The Keyword (Google)